AI for HR People Who Don't Want to Sound Dumb in Meetings

A plain-English guide to the stuff that actually matters — no CS degree required.

Every Director of HR Technology knows the feeling.

Your inbox chimes. It's an email from your CHRO containing a link to the latest visionary article from a top-tier industry analyst, usually pitching some new "Expert HR AI."

The subject line is always the same: “Have you seen this? Why aren’t we doing this yet?”

I was explaining one of these massive AI platform announcements to my 11-year-old son, Justin, the other day (whilst getting my butt kicked at ping-pong). I told him they had loaded 25 years of HR research into an AI that could answer any policy question. He thought about it for five seconds and said:

"So it's basically the Wikipedia of work?"

Out of the mouths of babes. That is exactly what it is.

And it's why these articles make my blood boil. They sell the dream of frictionless AI but completely ignore the architectural plumbing required to make it actually execute tasks in Workday.

So let's fix that. Every major AI concept that matters for HR right now can be explained through the lens of an 11-year-old doing homework on a Sunday night (AKA - the Night of Tears and Regret). And if it can't survive that test, it's not worth your time.

Let’s get down to it.

The Large Language Model (The Kid)

Before we get into tools, let's start with the thing itself.

A large language model (LLM), like ChatGPT, Claude, or Gemini, is like Justin after 11 years of school, YouTube, and life. He's absorbed a ton of information. He can reason through problems. He can even surprise you with what he knows.

But he's working from memory. Sometimes that memory is sharp. Sometimes it's... creative. ("Dad, I'm pretty sure the Egyptians invented pizza.")

The model is the brain. Everything else we're about to talk about is how you make that brain useful in your world.

Prompt Engineering (The Homework Instructions)

Here's where most people start, and honestly, it's the highest-ROI concept on this list.

You know how if you tell Justin "do your project," you get a half-hearted paragraph and a shrug? But if you say "write two paragraphs about daily life in ancient Egypt, one about food and one about housing, with at least one specific detail in each," suddenly you get something you can put on the fridge.

That's prompt engineering. It's just being specific.

Tell an AI "write a job description," and you get LinkedIn boilerplate. Tell it "write a job description for a Workday Report Writer, emphasizing cross-functional collaboration and calculated fields, in a tone that's professional but not robotic," and now we're cooking.

Most people blame the AI when they get bad output. Usually, it's the prompt.
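If you like seeing ideas in code, here is a minimal Python sketch of the same point. The `build_prompt` helper and its parameter names are made up for illustration; it does nothing more than bolt the missing specifics (role, emphasis, tone) onto a vague request.

```python
def build_prompt(task, role=None, emphasis=(), tone=None):
    """Assemble a specific prompt from the details a vague request leaves out."""
    sentence = task.strip().rstrip(".")
    if role:
        sentence += f" for a {role}"
    if emphasis:
        sentence += ", emphasizing " + " and ".join(emphasis)
    if tone:
        sentence += f", in a tone that's {tone}"
    return sentence + "."


# The vague version: the AI has to guess everything.
vague = build_prompt("Write a job description")

# The specific version: same task, with the guesswork removed.
specific = build_prompt(
    "Write a job description",
    role="Workday Report Writer",
    emphasis=["cross-functional collaboration", "calculated fields"],
    tone="professional but not robotic",
)

print(vague)
print(specific)
```

The second prompt is longer, and that is the whole trick: every extra clause is a decision you made instead of leaving it to the model.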

RAG: Retrieval-Augmented Generation (The Study Guide)

Justin needs to write about Egypt, but his memory is fuzzy. So you go full research mode, highlight the good parts of a few articles, drop the stack on his desk, and say "here, work from this."

Now Justin can write something solid. But here's the catch: he can only work with what you gave him. If his teacher posted updated instructions to Google Classroom an hour ago, he doesn't know. He's working from a frozen-in-time stack of paper.

That's RAG. In the real world, RAG means you feed your company's actual documents (policies, SOPs, knowledge base articles) to the AI at query time. It's far less likely to hallucinate an answer, because it pulls from your stuff instead of its memory.

This is what Justin called "the Wikipedia of work."

And when an analyst sells you a massive "Expert AI" for HR? (Ahem, Galileo.) That's what they're selling you. A RAG model. It's a study guide.

But it's read-only. It knows the answer. It can't press the buttons.
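For the curious, here is a rough Python sketch of the study-guide idea, under some loud assumptions. The policy snippets are invented, and the "retrieval" is plain word overlap standing in for the embedding-based search a real RAG product would more likely use. The shape is the part that matters: find the relevant pages first, then hand them to the model along with the question.

```python
import re

# Invented policy snippets standing in for your real documents.
POLICY_DOCS = {
    "pto": "Full-time employees accrue 15 days of PTO per year.",
    "remote": "Remote work requests need manager approval.",
    "expenses": "Submit expense reports within 30 days of purchase.",
}


def words(text):
    """Lowercase a string and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, docs, top_k=1):
    """Rank documents by how many words they share with the question."""
    q = words(question)
    ranked = sorted(docs, key=lambda name: len(q & words(docs[name])), reverse=True)
    return ranked[:top_k]


def build_rag_prompt(question, docs, top_k=1):
    """Build the text that would be sent to the model: sources plus question."""
    sources = retrieve(question, docs, top_k)
    context = "\n".join(f"[{name}] {docs[name]}" for name in sources)
    return (
        "Answer using only the sources below. "
        "If the answer is not there, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


question = "How many days of PTO do employees accrue?"
prompt = build_rag_prompt(question, POLICY_DOCS)
print(prompt)
```

Notice what is missing: nothing in this sketch touches Workday or changes a record. It only reads what it was handed.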

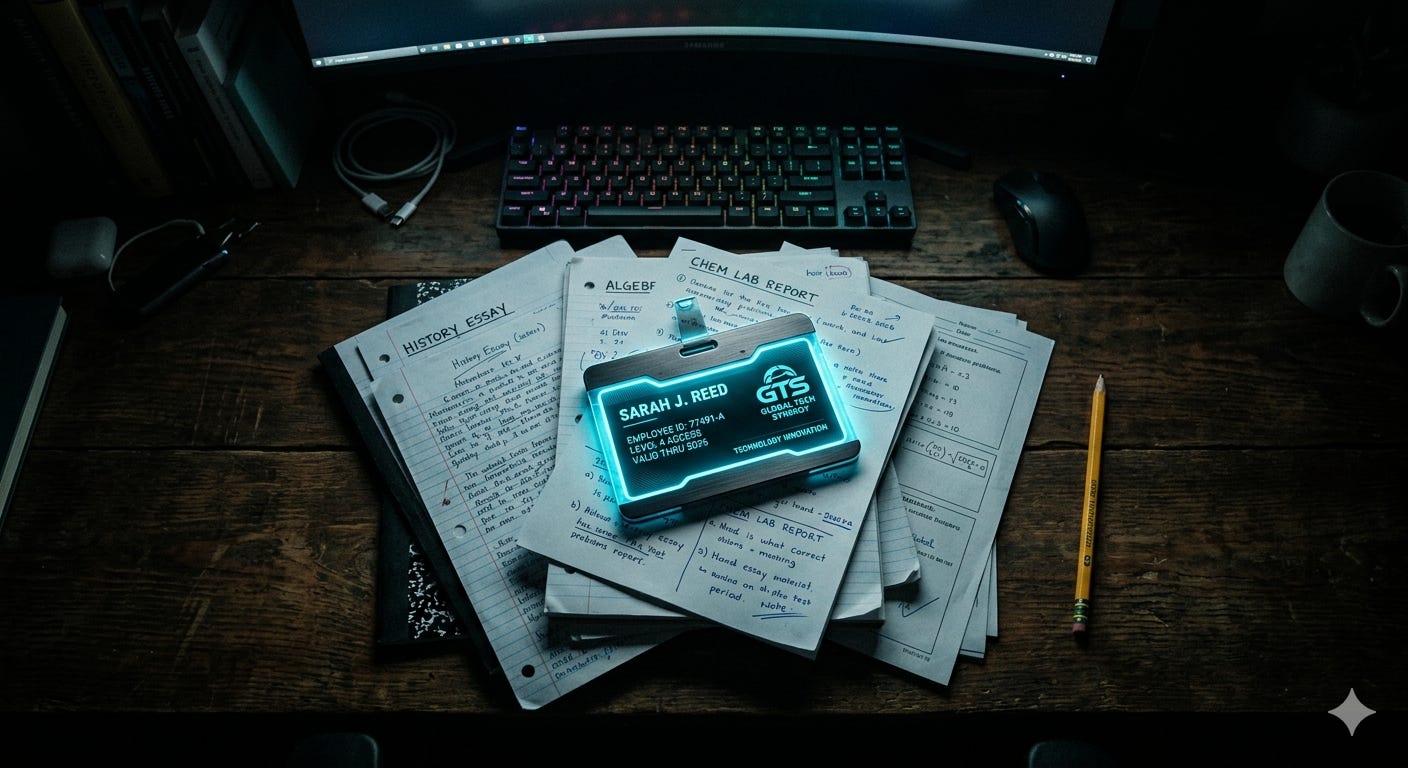

MCP: Model Context Protocol (The Badge and the Keys)

Same Sunday night. Same project. But instead of printing out articles, you take a different approach.

"Here's the login to Google Classroom. Here's the school library database. And here's your teacher's email if you need to ask a question."

Now Justin doesn't need you to pre-chew everything. He can see the latest assignment instructions himself. Check if the rubric changed. Search the library. Even email Mr. Hottenstein to clarify a question (shout out to Mr. Hottenstein, the GOAT). He's got live access to actual systems, and he can do things in them. Not just read stuff you handed him.

That's MCP.

MCP stands for Model Context Protocol. If you want another analogy, think of it as USB-C for AI. Remember when every phone had a different charger? MCP is the universal adapter: an open standard that gives AI a consistent way to plug into your tools. Your calendar. Your HRIS. Your ticketing system. Without someone building a one-off integration for every pairing of AI and tool.

Where RAG gives AI a cheat sheet, MCP gives AI an employee badge.

RAG is read-only and point-in-time. MCP is live, and it goes both directions; the AI can read and write.
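Here is a toy Python sketch of the "badge" idea, not the real thing. MCP messages are JSON-RPC requests, and the method names `tools/list` and `tools/call` follow the published spec, but everything else here is an assumption for illustration: the in-memory PTO data, the two tool functions, and the `handle` dispatcher. A real MCP server also does a setup handshake and publishes an input schema for each tool, and you would build it with an official SDK rather than by hand.

```python
import json

# Made-up in-memory data standing in for a real HRIS such as Workday.
PTO_BALANCES = {"E100": 12.0}
PTO_REQUESTS = []


def get_pto_balance(employee_id):
    """Read-only tool: look up a balance."""
    return {"employee_id": employee_id, "days": PTO_BALANCES[employee_id]}


def submit_pto_request(employee_id, days):
    """Write tool: record a request if the balance covers it."""
    if days > PTO_BALANCES[employee_id]:
        return {"status": "rejected", "reason": "insufficient balance"}
    PTO_REQUESTS.append({"employee_id": employee_id, "days": days})
    return {"status": "pending_approval", "days": days}


# Hypothetical tool registry: a name, a description, and the function to run.
TOOLS = {
    "get_pto_balance": ("Read an employee's PTO balance.", get_pto_balance),
    "submit_pto_request": ("Submit a PTO request for approval.", submit_pto_request),
}


def handle(request):
    """Answer one JSON-RPC-style request: list the tools, or call one."""
    method = request["method"]
    if method == "tools/list":
        result = {"tools": [{"name": n, "description": d} for n, (d, _) in TOOLS.items()]}
    elif method == "tools/call":
        _, func = TOOLS[request["params"]["name"]]
        output = func(**request["params"]["arguments"])
        result = {"content": [{"type": "text", "text": json.dumps(output)}]}
    else:
        error = {"code": -32601, "message": "Method not found"}
        return {"jsonrpc": "2.0", "id": request["id"], "error": error}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
read = handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
               "params": {"name": "get_pto_balance",
                          "arguments": {"employee_id": "E100"}}})
write = handle({"jsonrpc": "2.0", "id": 3, "method": "tools/call",
                "params": {"name": "submit_pto_request",
                           "arguments": {"employee_id": "E100", "days": 10}}})

print([tool["name"] for tool in listing["result"]["tools"]])
print(read["result"]["content"][0]["text"])
print(write["result"]["content"][0]["text"])
```

The AI first asks what it is allowed to do, then does it. The second call reads; the third one changes something in the system. That last part is exactly what the study guide cannot do.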

AI Agents (The Self-Directed Kid)

Now let's level Justin up.

Instead of you standing over his shoulder going "OK, now open the textbook... now write a topic sentence... now check the rubric...", imagine Justin just handles it. He reads the assignment, makes a plan, looks up what he needs, writes a draft, checks it against the rubric, and revises. All on his own (AKA - Dad Nirvana). He might ask you a question along the way, but he's driving.

That's an AI agent. An LLM that can plan and execute multi-step tasks: not just answer a question, but figure out what steps are needed, use the tools available, and work through the problem.

In HR terms: asking "what's our PTO policy?" is RAG. Asking "look up my PTO balance in Workday" is MCP. But an agent handles the whole thing: "I need two weeks off in August. Check my Workday balance, find dates that don't conflict with my team's schedules, and draft the request for my manager's approval."

Plan. Research. Execute.

This is the direction everything is heading. And it's why MCP matters so much. Agents need secure, structured access to your systems to do anything useful. MCP is the infrastructure that makes that possible.
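To make the plan-research-execute loop concrete, here is a minimal Python sketch built on big assumptions. The numbers and dates are invented, and `choose_next_step` is a hard-coded stand-in for the LLM, which in a real agent would decide the next step itself. The tools would also be live system calls instead of local functions.

```python
# Invented data. A real agent would fetch this through live system access.
BALANCE_DAYS = 12
TEAM_BUSY_WEEKS = {"Aug 3", "Aug 10"}
CANDIDATE_WEEKS = ["Aug 3", "Aug 10", "Aug 17", "Aug 24"]


def check_balance(state):
    state["balance"] = BALANCE_DAYS


def find_open_weeks(state):
    # Keep the first two weeks that do not clash with the team's schedule.
    state["weeks"] = [w for w in CANDIDATE_WEEKS if w not in TEAM_BUSY_WEEKS][:2]


def draft_request(state):
    days = 5 * len(state["weeks"])  # five working days per week
    if days > state["balance"]:
        state["draft"] = f"Cannot request {days} days; balance is {state['balance']}."
    else:
        weeks = " and ".join(state["weeks"])
        state["draft"] = (
            f"Requesting {days} days off for the weeks of {weeks}, "
            "pending manager approval."
        )


TOOLS = {
    "check_balance": check_balance,
    "find_open_weeks": find_open_weeks,
    "draft_request": draft_request,
}


def choose_next_step(state):
    """Stand-in for the LLM: pick the next tool based on what is still missing."""
    if "balance" not in state:
        return "check_balance"
    if "weeks" not in state:
        return "find_open_weeks"
    if "draft" not in state:
        return "draft_request"
    return None  # nothing left to do


def run_agent(goal, max_steps=10):
    """Loop: decide the next step, run that tool, repeat until done."""
    state = {"goal": goal}
    log = []
    for _ in range(max_steps):
        step = choose_next_step(state)
        if step is None:
            break
        TOOLS[step](state)
        log.append(step)
    return state, log


state, log = run_agent("I need two weeks off in August.")
print(log)
print(state["draft"])
```

The `max_steps` cap and the fact that the agent only drafts the request, leaving approval to the manager, are deliberate: an agent that can act in your systems needs guardrails on how far it runs alone.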

We're early, but not as early as you'd think. This is live and in the wild: not everywhere yet, but we're seeing it. The groundwork you lay now (clean data, solid integrations, well-defined business processes) is what determines whether your org is ready when agents show up for real.

The Cheat Sheet

Because I know you skimmed. (It's fine. I did too.)

(Format: Concept | Justin Analogy → HR Example)

LLM | The kid (smart but working from memory) → The AI itself: Claude, ChatGPT, etc.

Prompting | Clear homework instructions → Writing better queries to get better output

RAG | The highlighted study guide → AI that answers from your actual policy docs

MCP | Login access to school systems → AI that connects to Workday/ServiceNow to take action

Agents | Doing the project end-to-end → AI that handles multi-step workflows autonomously

How to Answer Your CHRO

You don't need a CS degree to make good decisions about AI in HR. But you do need this vocabulary, because the vendors are coming, the RFPs are coming, and you do not want to get swindled by someone selling a RAG model disguised as an agent.

The next time an executive forwards you an analyst's hype piece and asks "Why aren't we doing this?", you have your answer:

"The tool in this article is a RAG model, a great study guide, but it doesn't execute business processes in Workday. Our primary mandate right now is fixing our foundational data so we can eventually deploy true agentic AI that actually removes friction for our managers. Buying another study guide won't fix the workflow."

Let the analysts write the encyclopedias. We have architecture to build.

This is the first piece in a series on how the Department of First Things First actually uses AI in its workflow. Not theory! The real, messy, practical version. If you want to follow along, subscribe and I'll see you next week.

PS- If this was useful, share it with someone in your org who keeps nodding along in AI conversations but definitely Googles everything afterward. We've all been there.

PPS- I have just received a directive from Justin's agent (his mother) that in order for Justin to maintain his position as Chief UX Tester at the Department of First Things First, I am hereby required to refer to him as "the nearly 12-year-old." I have acquiesced to this demand without negotiation. Some agents don't need MCP to execute.

— Mike

I appreciate your explanation of the different terms for beginners. Question for you and other readers: how do you feel about the ethics of using AI agents to promote your articles on Substack and comment on others' posts without sharing that it's an AI agent? Someone sent their agent to comment on my post about AI, and I was engaging in good faith until I noticed a pattern in their responses and called out what I was observing.