Josh Bersin Is Right at 30,000 Feet. I Work on the Ground.

A practitioner's rebuttal to the analyst's skybox.

TL;DR: Josh Bersin published a piece this week on Substack arguing that AI layoffs are overhyped and that AI transformation is about quality and scale, not job elimination. He's not wrong. But he's writing from the analyst's skybox, and some of his claims (particularly around bias and supervision) are dangerously incomplete when you're the person actually wiring this stuff into an enterprise ERP. Here's the view from the tenant.

Josh Bersin dropped a piece this week called "Layoffs at Atlassian, Block, Amazon are Misleading. AI Alone Is Not The Story."

I read it twice. The first time I nodded along. The second time I started highlighting the parts that made my eye twitch.

And look, I want to be clear about something. I'm not here to dunk on Josh Bersin. The man has forgotten more about HR than most of us will ever learn. His "Talent Density" framing is genuinely useful. His point that companies overhire during growth and then blame AI for the correction? Dead on. That's not an AI story. That's a management story wearing an AI costume.

(It's like when your kid breaks a lamp and blames the dog. The dog was in the room, sure. But the dog didn't throw the football.)

Where it gets complicated is when Josh moves from macro-level workforce commentary into the specifics of how AI actually works inside a recruiting stack. Because that's where the altitude starts to show.

The Bias Blind Spot

Here's the line that stopped me cold:

Josh writes that AI recruiting tools "reduce bias (they don't care what someone looks like or their college degree, unless you tell them to)."

Sit with that for a second.

This framing treats AI bias like a settings toggle. Like somewhere in the admin console there's a checkbox that says ☑️ Be Racist and as long as you don't click it, you're fine.

That's not how this works. That's not how any of this works.

Agentic AI doesn't need to be told to discriminate. It correlates patterns. If your historical hiring data shows that candidates from certain zip codes, with certain speech cadences, or with gaps in their resumes tend to get screened out, the AI will learn that pattern and execute it at scale. Not because anyone told it to. Because that's what the data said to do.

A biased human recruiter might impact a dozen reqs. A biased autonomous agent will flawlessly apply that same learned pattern across 10,000 applications before your analytics team has had their morning coffee.

Josh is right that AI doesn't care what someone looks like. But it absolutely cares about every proxy variable that correlates with what someone looks like. And at enterprise scale, that distinction isn't academic. It's a lawsuit.
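
To make the proxy problem concrete, here is a minimal Python sketch. Everything in it is made up for illustration: the applicants are synthetic, the zip codes are placeholders, and `screen` is a hypothetical stand-in for whatever rule a model might learn from historical data. The rule never reads the `group` field, yet the selection rates split by group anyway. The 0.8 threshold is the four-fifths rule of thumb from the EEOC's Uniform Guidelines, used here as a rough flag, not a legal test.

```python
from collections import defaultdict


def make_applicants():
    """Build a synthetic applicant pool (illustrative numbers only)."""
    # (group, zip_code, count): zip code is correlated with group membership.
    layout = [("A", "11111", 8), ("A", "22222", 2),
              ("B", "11111", 2), ("B", "22222", 8)]
    rows = []
    for group, zip_code, count in layout:
        for _ in range(count):
            rows.append({"group": group, "zip_code": zip_code})
    return rows


def screen(applicant):
    """Hypothetical learned rule: it looks only at zip code, never at group."""
    return applicant["zip_code"] == "11111"


def selection_rates(applicants, decide):
    """Share of each group that the decision function advances."""
    totals = defaultdict(int)
    advanced = defaultdict(int)
    for applicant in applicants:
        totals[applicant["group"]] += 1
        if decide(applicant):
            advanced[applicant["group"]] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def adverse_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody advanced, so there is no disparity to measure
    return min(rates.values()) / highest


if __name__ == "__main__":
    rates = selection_rates(make_applicants(), screen)
    ratio = adverse_impact_ratio(rates)
    print("selection rates by group:", rates)
    print("adverse impact ratio:", round(ratio, 2))
    if ratio < 0.8:
        print("below the four-fifths rule of thumb: send to a human for review")
```

Nobody clicked a checkbox here. The disparity comes entirely from the correlation between zip code and group in the data, which is why an audit like this has to run on outcomes, not on the list of fields the agent was told to use.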

The Supervision Myth

Josh cites the Amazon VP story, in which an AI executive eliminated 16,000 positions in a single quarter and then had to rehire people to supervise the AI when bug rates spiked and escalations piled up.

Josh presents this as evidence that humans remain essential. Which, yes. Obviously. But he breezes past the part that should terrify every HR technology leader reading his newsletter: they built the plane while flying it and had to rehire the mechanics mid-flight.

That's not a success story. That's a governance failure with a happy-ish ending.

And then Josh says the quiet part out loud: in recruiting, the roles that get eliminated aren't the "high-value recruiters" but the interview schedulers, job posting admins, and advertising coordinators.

He frames this casually. Like those roles are just friction to be automated away. But those roles are also the humans who catch errors. The scheduler who notices the interview is booked for a location that closed last week. The admin who flags that a job posting has the wrong salary band. The coordinator who realizes the AI sent a confirmation to a candidate whose application was actually rejected.

When you remove the human middleware and replace it with an autonomous agent, you'd better have governance in place, because no one is catching the 2 AM mistakes anymore.

(I wrote about this yesterday in The Agentic Leash. I call it Schrödinger's Candidate. The AI tells the applicant they have an interview Tuesday at 9 AM. The API drops the payload. The store manager's dashboard is empty. The candidate exists and doesn't exist at the same time. It's fun as a thought experiment and deeply terrifying as a Tuesday morning in your Workday tenant.)
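
One way to catch a Schrödinger's Candidate is a plain reconciliation job: compare what the agent told candidates against what actually landed in the system of record. The sketch below is a minimal version under stated assumptions. `Interview`, `reconcile`, and both input lists are hypothetical names of my own; nothing here is a real Workday or vendor API, and the candidate IDs and time slots are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True, order=True)
class Interview:
    """One scheduled interview, identified by candidate and time slot."""
    candidate_id: str
    slot: str  # start time as a string, e.g. "TUE 09:00" (made-up format)


def reconcile(agent_confirmed, system_of_record):
    """Compare what the agent promised with what the ERP actually holds.

    Both arguments are iterables of Interview. How you export them from
    the agent's log and from the ERP is left out; that part is assumed.
    """
    promised = set(agent_confirmed)
    recorded = set(system_of_record)
    return {
        # Candidate was told to show up, but the manager's dashboard is empty.
        "promised_not_recorded": sorted(promised - recorded),
        # The ERP has an interview that the candidate never heard about.
        "recorded_not_promised": sorted(recorded - promised),
    }


if __name__ == "__main__":
    agent_log = [Interview("c-101", "TUE 09:00"), Interview("c-102", "TUE 10:00")]
    erp_rows = [Interview("c-102", "TUE 10:00")]
    for bucket, items in reconcile(agent_log, erp_rows).items():
        print(bucket, items)
```

Run on a schedule, a check like this turns the dropped payload from a Tuesday morning surprise into an alert someone can act on before the candidate arrives.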

The Justin Postulate

Justin (11) has become the unofficial UX tester for this newsletter. I run things by him not because he understands enterprise architecture, but because he has the attention span and patience level of the exact demographic these AI tools are trying to hire.

So I explained the Bersin thesis to him at dinner. I said: "A really smart guy says AI won't replace recruiters, it'll just make them faster and better."

Justin thought about it for a second and said: "So it's like giving a kid a power washer. It cleans the walkway way faster. But if nobody's watching, he's definitely going to spray the dog."

I don't have a rebuttal for that. I think that's the whole article.

(Some context: Justin earned $20 power washing our front walkway last month before the cold snap. If anyone is interested in the Ring video evidence, DM me.)

The Ground-Level Truth

Here's what I agree with Josh on: AI transformation is primarily about quality and scale, not mass job elimination. The McDonald's and 7-Eleven time-to-hire numbers are real. The productivity gains are real. I just spent a week at IAMPHENOM watching demos that genuinely impressed me. This technology is necessary and it's coming whether we're ready or not.

But "the jobs don't go away, they just change" is an analyst's framing. On the ground, what's actually happening is that the risk profile of those jobs is changing dramatically. We're not replacing recruiters with robots. We're replacing guardrails with algorithms and hoping the algorithms don't spray the dog.

Josh says the ROI isn't about laying off recruiters. He's right. The ROI is about hiring faster, staffing better, and improving quality. But the ROI collapses the moment your autonomous agent hallucinates a sign-on bonus, introduces bias at scale, or creates a candidate that exists in the AI's memory but is invisible to your ERP.

The vendors are building the AI. Josh is narrating the transformation from the skybox.

Some of us are down here building the leash.

— Mike | The Department of First Things First

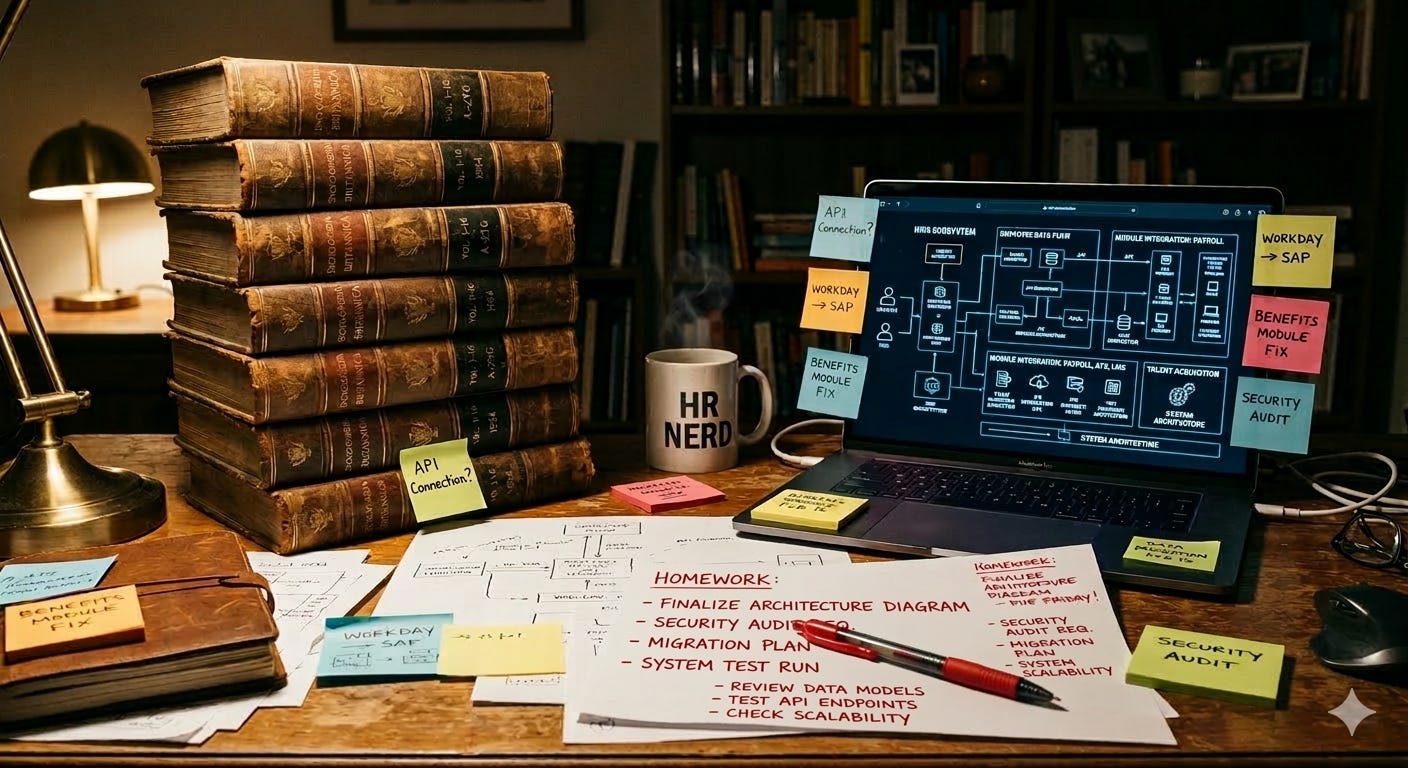

P.S. — I told Justin that Josh Bersin has been studying HR longer than I've been alive. Justin said, "So he's like the Wikipedia of work?" Not inaccurate, kiddo. But sometimes you need someone who's actually done the homework, not just read the encyclopedia.