Josh Bersin Wants to Teach You AI Literacy. I Want to Show You Mine.

Part 3 of the "How the Department of First Things First Actually Uses AI" Series.

Find my prior posts on this topic here: Part 1, Part 2.

tl;dr: Josh Bersin released a podcast about AI literacy last week. It’s fourteen minutes of anxiety creation and product placement. His core argument — “AI learns from language, so be logical” — isn’t wrong, it’s just useless for practitioners. Real AI literacy for HR tech people isn’t about prompting or vocabulary. It’s about governance: who holds the leash on AI agents, what happens when they act on employee data, and how the requirements scale dramatically across Workday Illuminate’s tiers (Accelerate → Assist → Transform).

Josh Bersin dropped a podcast last week called “What Does AI Literacy Really Mean?”

Fourteen minutes long. And in those fourteen minutes, he managed to identify a problem, create anxiety about it, and sell you the cure. It’s a clean structure. The kind you’d respect if it weren’t aimed directly at your budget.

I’m not saying AI literacy isn’t important. It is. But when an analyst defines the disease and sells the medicine, you’re allowed to ask for a second opinion.

Here’s mine.

“AI Learns From Language” Is Not a Framework

Bersin’s big reveal is that AI learns from language, so you need to be able to put your needs into “logical statements” to get the most out of it.

That’s like telling someone learning to drive that cars run on gasoline. True. Not particularly useful when you’re trying to parallel park.

You know what AI literacy actually looks like for the people reading this newsletter? It looks like understanding why the same prompt gets wildly different results depending on how much context you give it. It looks like knowing what RAG (retrieval-augmented generation) is, not because you need to build a RAG pipeline yourself, but because your vendor is about to sit across from you and say their product “uses RAG” with the confidence of a sophomore who Wikipedia’d the topic ten minutes before the presentation. You need to know whether that’s meaningful or marketing.

It looks like understanding the difference between a model suggesting something and a model doing something. Which (and I really need everyone to internalize this) is the difference between “helpful tool” and “thing that just changed an employee’s compensation while you were in a meeting about change management.”

That’s not “be more articulate.” That’s architecture.
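If you want to see how small that difference looks on paper, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Mode` enum, the `ProposedChange` record, the `APPROVAL_REQUIRED` field list, and `may_apply` are hypothetical names, not anything from Workday or any vendor API. It only shows the shape of the rule: a suggestion never writes itself, and even an agent that is allowed to act still needs a named human for sensitive fields.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    SUGGEST = "suggest"  # the model proposes, a human decides
    ACT = "act"          # the model may write the change itself


@dataclass
class ProposedChange:
    employee_id: str
    field: str
    new_value: str
    approved_by: Optional[str] = None  # named human approver, if any


# Hypothetical policy: fields an agent may never write without a human.
APPROVAL_REQUIRED = {"compensation", "job_level", "termination_date"}


def may_apply(change: ProposedChange, mode: Mode) -> bool:
    """Return True only if this change is allowed to be written."""
    if mode is Mode.SUGGEST:
        # In suggest mode nothing is written without a named approver.
        return change.approved_by is not None
    if change.field in APPROVAL_REQUIRED:
        # In act mode, sensitive fields still need a named approver.
        return change.approved_by is not None
    return True
```

With a rule like this, an unapproved compensation change is rejected by `may_apply` even when the agent is running in act mode. The interesting governance work is deciding what goes in `APPROVAL_REQUIRED` and who is allowed to be the approver.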

Bersin doesn’t go there. And to be fair, that’s not really his audience. His audience is buying. This newsletter’s audience is building. The advice that works at 30,000 feet can be actively misleading at ground level.

The Grocery List Is Not a Meal

In his podcast notes, Bersin name-drops bias, risk, auditability, data quality, and tuning. He even throws in a note about “vibe coding” being more complex than people think.

Cool. That’s a grocery list.

You don’t hand someone a list that says “chicken, rice, lemon, garlic” and call yourself a cooking instructor.

Who owns bias review when an AI suggests a compensation adjustment? What’s your escalation path when an agent takes an action on employee data that doesn’t match your business rules? What do you do on the Monday morning when someone in legal calls because an AI-driven recommendation created a disparate impact pattern that nobody caught because nobody was assigned to catch it?

These aren’t abstract questions. They’re Tuesday. They’re the actual work. And no podcast or newsletter (mine included) answers them for you. The difference is I’ll tell you that upfront instead of pointing you toward a subscription product.
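To make one of those questions a little less abstract: a common first screen for disparate impact in US practice is the four-fifths rule, which flags any group whose selection rate falls below 80% of the highest group's rate. Here is a rough sketch, assuming you can count, per group, how many people were eligible and how many received the AI-recommended outcome. The function names and the sample counts are made up for illustration, and a flag is a prompt for a named human to look closer, not a finding.

```python
def selection_rates(outcomes):
    """Map each group to selected / eligible, skipping empty groups.

    `outcomes` maps a group label to a (selected, eligible) pair of counts.
    """
    return {
        group: selected / eligible
        for group, (selected, eligible) in outcomes.items()
        if eligible > 0
    }


def four_fifths_flags(outcomes, threshold=0.8):
    """Return groups whose rate is below `threshold` times the top rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return []
    top_rate = max(rates.values())
    if top_rate == 0:
        return []  # nobody was selected, so there is no ratio to compare
    return sorted(g for g, r in rates.items() if r / top_rate < threshold)


# Illustrative counts only: (received recommended adjustment, eligible).
sample = {
    "group_a": (40, 100),
    "group_b": (25, 100),
    "group_c": (36, 100),
}
flagged = four_fifths_flags(sample)
```

The code is the easy part. The check only helps if someone is assigned to run it, on a schedule, with the authority to pause the recommendation when a group shows up in `flagged`.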

So What Does AI Literacy Actually Look Like?

It looks like governance.

I know. I’m sorry. I just said the word “governance” and half of you felt your soul leave your body. Stay with me.

Not the “let’s draft an AI ethics statement and put it on the intranet where it can live next to the recycling policy and the 2019 holiday party photos” kind.

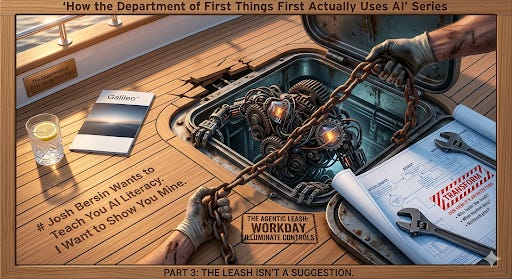

The kind where you decide (before you turn anything on) who holds the leash, how long it is, and what happens when the dog runs into traffic. Because the dog will run into traffic. The dog always sees a squirrel named “efficiency” and just goes.

I wrote about this in “The Agentic Leash” a few weeks ago, and the response told me something: practitioners are hungry for this conversation. Not the theoretical version. The operational one.

So let’s get operational.

The Tiers Are Not Created Equal

If you’re in the Workday ecosystem (and if you’re reading this, there’s a solid chance you are), you already know about Illuminate. What you might not have thought about is how dramatically the governance requirements change as you move through the tiers.

Accelerate is the easy one. Embedded AI. Summarization, natural language search, smart suggestions that surface information. Low risk. The AI is basically a really enthusiastic research assistant who never sleeps and never asks for PTO. You still make every decision. Governance here is mostly “did we turn it on?” and “does our security model still make sense?” This is the tier where everyone feels smart. Enjoy it.

Assist is where it gets interesting. The AI starts making recommendations that humans act on. Job matching. Compensation suggestions. Talent pipeline insights. The AI is no longer just surfacing information — it’s interpreting it. And the human on the other end might not know how to evaluate whether the interpretation is any good.

That’s where literacy matters. Not “can you prompt well?” but “do you understand what you’re looking at well enough to push back on the machine?” Because here’s the thing about humans: we are phenomenal at deferring to something that sounds confident. The AI gives you a recommendation with a little percentage next to it and suddenly everyone in the room is nodding like it came down from a mountain on a stone tablet.

Transform is the one that keeps me up at night. This is where agents take action. Not suggest action. Take action. And the governance model for that is fundamentally different from anything most HR technology teams have ever had to build.

Who approved the model’s logic? Who reviews the output before it hits a manager’s inbox? What if it’s wrong? What if it’s right but the business context changed and nobody told the model? What’s the rollback plan?

These questions don’t show up in a fourteen-minute podcast about AI literacy. But they’re going to show up in your implementation, whether you’re ready for them or not.
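One way to keep those questions from getting lost is to write them down as a checklist per tier and refuse to flip the switch until the list is covered. The sketch below is a rough mapping of the tier descriptions above to controls. The control names and the `REQUIRED_CONTROLS` table are assumptions for illustration, not Workday's terminology or configuration.

```python
# Assumed controls per tier, derived from the descriptions above.
# Each tier inherits everything required by the tier before it.
REQUIRED_CONTROLS = {
    "accelerate": ["security_model_review"],
    "assist": [
        "security_model_review",
        "named_reviewer",        # someone owns evaluating recommendations
        "reviewer_training",     # they know enough to push back
    ],
    "transform": [
        "security_model_review",
        "named_reviewer",
        "reviewer_training",
        "logic_approval",        # who approved the model's logic?
        "pre_delivery_review",   # who sees output before a manager does?
        "rollback_plan",         # how do we undo an agent's action?
    ],
}


def missing_controls(tier, in_place):
    """List the controls this tier needs that are not yet in place."""
    return [c for c in REQUIRED_CONTROLS[tier] if c not in in_place]


def ready_to_enable(tier, in_place):
    """A tier is ready only when no required control is missing."""
    return not missing_controls(tier, in_place)
```

A team that has only done the security review and named a reviewer would pass for Accelerate and come back with four open items for Transform. Your own list will differ; the point is that it gets longer as the agent gets more autonomy.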

A Scenario, Because I Know How We Learn

Let’s say your Workday environment is running an AI agent (Transform tier) that analyzes talent pipeline data and suggests changes to open job requisitions. Adjusted job descriptions. Revised compensation ranges. Maybe even suggested changes to hiring criteria based on what’s working in similar roles.

Sounds amazing. Sounds like the future.

Now: who approved the logic the model used to decide what “working” means? Is it optimizing for time-to-fill? Quality of hire? Retention at 12 months? All three? Because those optimization targets can conflict with each other, and the model picked one. Or blended them. And someone in your organization needs to know which, and why, and whether that aligns with what your CHRO actually wants (or what they said they wanted six months ago before the reorg).

The output lands in a recruiter’s queue. It says “adjust the comp range for this req from $85K–$95K to $92K–$105K based on market data and pipeline conversion rates.” The recruiter sees an AI-generated recommendation and thinks, “Cool, the system says so.” They adjust it. The hiring manager approves it. Nobody interrogated it.

Nobody asked: what market data? What pipeline? Over what time period?

Six months later, you’ve got a comp equity issue that nobody can trace because it started with an AI recommendation that twenty people approved by not questioning it.

That’s not an AI failure. That’s a literacy failure. And it’s a governance failure. Because nobody defined who’s supposed to ask those questions before the recommendation goes live.
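Here is a hedged sketch of what "defining who asks" could look like: a recommendation record that is not allowed into a recruiter's queue until the provenance questions have answers and a reviewer's name is attached. The `CompRecommendation` class and its field names are hypothetical, chosen to mirror the questions in the scenario. They do not correspond to any real Workday object.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class CompRecommendation:
    requisition_id: str
    current_range: Tuple[int, int]
    proposed_range: Tuple[int, int]
    market_data_source: Optional[str] = None   # what market data?
    pipeline_scope: Optional[str] = None       # what pipeline?
    period: Optional[str] = None               # over what time period?
    optimization_target: Optional[str] = None  # what does "working" mean?
    reviewer: Optional[str] = None             # who is assigned to ask?


# The fields that must be filled in before anyone acts on the record.
PROVENANCE_FIELDS = (
    "market_data_source",
    "pipeline_scope",
    "period",
    "optimization_target",
    "reviewer",
)


def unanswered(rec: CompRecommendation) -> list:
    """Return the provenance questions that still have no answer."""
    return [name for name in PROVENANCE_FIELDS if not getattr(rec, name)]


def can_enter_queue(rec: CompRecommendation) -> bool:
    """Only fully documented recommendations reach a recruiter."""
    return not unanswered(rec)
```

In the scenario above, the $92K–$105K recommendation would have stopped here with a list of unanswered questions instead of sailing through on "the system says so."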

This is what AI literacy looks like in practice. It’s not prompting. It’s not “being logical.” It’s understanding the system well enough to know when to trust it, when to question it, and when to shut it off and figure out what went wrong.

The Encyclopedia vs. The Architecture

Bersin will keep writing the encyclopedias. There’s value in that. The categories, the frameworks, the market maps: they serve an audience. He’s earned that lane.

But if you’re the person who has to actually build the thing? Configure the tenant? Explain to your VP why the AI did what it did? Stand in front of a governance committee and say “here’s how we control this” while someone from legal stares at you like you just described a haunted house?

You don’t need an encyclopedia. You need architecture.

AI literacy for practitioners isn’t a course you take or a product you subscribe to. It’s a muscle you build. It’s knowing your platform, knowing your data, and knowing who’s accountable when the machine makes a move.

Next week: what happens when you actually let the agent off the leash. Part 4 goes deeper on the governance operating model: who owns what, how to build the review cadence, and why your current change management process is going to look at agentic AI and simply burst into flames.

If this is the kind of thing that makes you nod at your screen in a way that concerns your coworkers, please subscribe. And if you know someone who’s building, not just buying, share this with them. Forward the email. Post the link. Do that thing where you screenshot a paragraph and put it on LinkedIn with a “THIS 👆” caption. I won’t judge.

(I will judge a little.)

— Mike

The Department of First Things First. For the people who do the work.

P.S. Justin just wrapped up travel 14U volleyball season, where the gym acoustics are specifically engineered to ensure no parent retains their hearing by age fifty. And now we’re rolling straight into spring tennis tournament season, where I get to watch twelve-year-olds make line calls that would get a FIFA referee fired. USTA rules: on the line is out, inside the line is questionable, and clearly in is “I didn’t see it.” Governance starts at home, people.